ClickHouse

Ship your ClickHouse Metrics via Telegraf to your Logit.io Stack

Follow the steps below to send your observability data to Logit.io

Metrics

Configure Telegraf to ship ClickHouse metrics to your Logit.io stacks.

Install Integration

Install Telegraf

This integration allows you to configure a Telegraf agent to send your metrics to Logit.io.

Choose the installation method for your operating system:

Paste the command below into PowerShell to download the Telegraf zip file.

Once the download is complete, press Enter again and the zip file will be extracted into C:\Program Files\InfluxData\telegraf\telegraf-1.34.1.

wget https://dl.influxdata.com/telegraf/releases/telegraf-1.34.1_windows_amd64.zip -UseBasicParsing -OutFile telegraf-1.34.1_windows_amd64.zip

Expand-Archive .\telegraf-1.34.1_windows_amd64.zip -DestinationPath 'C:\Program Files\InfluxData\telegraf'

Or, in PowerShell 7, use:

# Download the Telegraf ZIP file
Invoke-WebRequest -Uri "https://dl.influxdata.com/telegraf/releases/telegraf-1.34.1_windows_amd64.zip" `
    -OutFile "telegraf-1.34.1_windows_amd64.zip" `
    -UseBasicParsing

# Extract the contents of the ZIP file
Expand-Archive -Path ".\telegraf-1.34.1_windows_amd64.zip" `
    -DestinationPath "C:\Program Files\InfluxData\telegraf"

The default configuration file is located at:

C:\Program Files\InfluxData\telegraf\telegraf.conf

Configure Telegraf

The configuration below is pre-configured to scrape ClickHouse metrics and system metrics from your hosts. Add it to the telegraf.conf configuration file from the previous step.

### Read metrics from one or many ClickHouse servers

[[inputs.clickhouse]]

## Username for authorization on ClickHouse server

username = "default"

## Password for authorization on ClickHouse server

# password = ""

## HTTP(s) timeout while getting metrics values

## The timeout includes connection time, any redirects, and reading the

## response body.

# timeout = "5s"

## List of servers for metrics scraping

## metrics scrape via HTTP(s) clickhouse interface

## https://clickhouse.tech/docs/en/interfaces/http/

servers = ["http://127.0.0.1:8123"]

## If "auto_discovery" is "true", the plugin tries to connect to all servers

## available in the cluster using the same "username:password" given in the

## "username" and "password" parameters, and gets the server hostname list

## from the "system.clusters" table. See

## - https://clickhouse.tech/docs/en/operations/system_tables/#system-clusters

## - https://clickhouse.tech/docs/en/operations/server_settings/settings/#server_settings_remote_servers

## - https://clickhouse.tech/docs/en/operations/table_engines/distributed/

## - https://clickhouse.tech/docs/en/operations/table_engines/replication/#creating-replicated-tables

# auto_discovery = true

## Filter cluster names in "system.clusters" when "auto_discovery" is "true".

## When this filter is present, a "WHERE cluster IN (...)" filter is applied.

## Please use only full cluster names here; regexp and glob filters are not

## allowed. For "/etc/clickhouse-server/config.d/remote.xml":

## <yandex>

## <remote_servers>

## <my-own-cluster>

## <shard>

## <replica><host>clickhouse-ru-1.local</host><port>9000</port></replica>

## <replica><host>clickhouse-ru-2.local</host><port>9000</port></replica>

## </shard>

## <shard>

## <replica><host>clickhouse-eu-1.local</host><port>9000</port></replica>

## <replica><host>clickhouse-eu-2.local</host><port>9000</port></replica>

## </shard>

## </my-own-cluster>

## </remote_servers>

##

## </yandex>

##

## example: cluster_include = ["my-own-cluster"]

# cluster_include = []

## Filter cluster names in "system.clusters" when "auto_discovery" is

## "true" when this filter present then "WHERE cluster NOT IN (...)"

## filter will apply

## example: cluster_exclude = ["my-internal-not-discovered-cluster"]

# cluster_exclude = []

## Optional TLS Config

# tls_ca = "/etc/telegraf/ca.pem"

# tls_cert = "/etc/telegraf/cert.pem"

# tls_key = "/etc/telegraf/key.pem"

## Use TLS but skip chain & host verification

# insecure_skip_verify = false

### System metrics

[[inputs.disk]]

[[inputs.net]]

[[inputs.mem]]

[[inputs.system]]

[[inputs.cpu]]

percpu = false

totalcpu = true

collect_cpu_time = true

report_active = true

### Output

[[outputs.http]]

url = "https://@metricsUsername:@metricsPassword@@metrics_id-vm.logit.io:@vmAgentPort/api/v1/write"

data_format = "prometheusremotewrite"

[outputs.http.headers]

Content-Type = "application/x-protobuf"

Content-Encoding = "snappy"

Read more about how to configure data scraping and configuration options for ClickHouse.
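The commented auto-discovery options in the sample above can be easier to follow as a small worked example. The fragment below is a minimal sketch, not a recommended production setup: it assumes a single reachable ClickHouse node on the default HTTP port and reuses the illustrative cluster name "my-own-cluster" from the sample comments. It is meant to replace the [[inputs.clickhouse]] block shown above rather than sit alongside it.

```toml
# Sketch only: the server URL, credentials and cluster name are placeholders.
[[inputs.clickhouse]]
  username = "default"
  # password = ""

  # Starting server; with auto_discovery enabled the plugin reads further
  # hostnames from the "system.clusters" table, as the sample comments describe.
  servers = ["http://127.0.0.1:8123"]

  # Discover the other cluster members and restrict scraping to one cluster.
  auto_discovery = true
  cluster_include = ["my-own-cluster"]

  # Durations are written as quoted strings.
  timeout = "5s"
```

Leaving cluster_include empty means no "WHERE cluster IN (...)" restriction is applied, so every cluster listed in "system.clusters" is considered.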

Start Telegraf

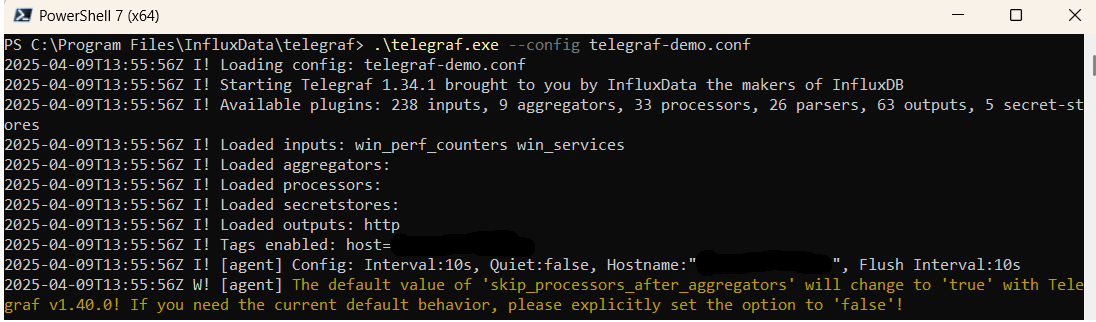

From the location where Telegraf was installed (C:\Program Files\InfluxData\telegraf\telegraf-1.34.1) run the program

providing the chosen configuration file as a parameter:

.\telegraf.exe --config telegraf.confOnce Telegraf is running you should see output similar to the following, which confirms the inputs, output and basic configuration the application has been started with:

Launch Grafana to View Your Data

Launch Grafana

How to diagnose no data in Stack

If you don't see data appearing in your stack after following this integration, take a look at the troubleshooting guide for steps to diagnose and resolve the problem or contact our support team and we'll be happy to assist.

Telegraf ClickHouse metrics Overview

To monitor and analyze ClickHouse metrics effectively in a distributed environment, you need a reliable and efficient metrics management solution. Telegraf, an open-source agent for collecting and sending metrics, is well suited to gathering ClickHouse metrics from numerous sources, such as operational ClickHouse databases, other related applications, and the underlying infrastructure.

Telegraf boasts an array of input plugins, enabling users to collect metrics from diverse sources like CPU usage, memory consumption, network activity, and many more. For storing and querying these amassed metrics, organizations can employ Prometheus, an open-source system that offers both monitoring and alerting capabilities. This tool supports a flexible querying language and has strong graphical data visualization features.

To ship ClickHouse metrics from Telegraf to Prometheus, organizations configure Telegraf to collect ClickHouse metrics and output them in the Prometheus format, then configure Prometheus to scrape these metrics from the Telegraf server. The data can then be visualized and analyzed using Prometheus's querying and visualization capabilities.
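The Prometheus-format output described above can be sketched with Telegraf's prometheus_client output plugin. This fragment is an illustration under stated assumptions and is separate from the outputs.http remote-write configuration used earlier in this guide: the listen address is the plugin's documented default, and you would choose a port that suits your own environment.

```toml
# Sketch only: expose collected metrics in Prometheus exposition format.
[[outputs.prometheus_client]]
  # Address and port Telegraf listens on (plugin default shown).
  listen = ":9273"

  # Use version 2 of the Prometheus metric mapping.
  metric_version = 2
```

With this in place, a Prometheus server would be pointed at the /metrics path on that port of the Telegraf host as a scrape target.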

Once the metrics are in Prometheus, further analysis and visualization can be performed using Grafana. Grafana is an open-source platform that's perfect for monitoring and observability and is entirely compatible with Prometheus. It provides users with the tools to create dynamic and interactive dashboards, enabling them to dig deeper into the metrics data.

If you need any further assistance with shipping your metrics to Logit.io, we're here to help you get started. Feel free to contact our support team by sending us a message via live chat and we'll be happy to assist.