Google BigQuery BI Engine Metrics

Ship your Google BigQuery BI Engine Metrics via Telegraf to your Logit.io Stack

Follow the steps below to send your observability data to Logit.io

Metrics

Configure Telegraf to ship Google BigQuery BI Engine metrics to your Logit.io stacks via Logstash.

Install Integration

Set Credentials in GCP

- Begin by heading over to the 'Project Selector' and select the specific project from which you wish to send metrics.

- Progress to the 'Service Account Details' screen. Here, assign a distinct name to your service account and opt for 'Create and Continue'.

- In the 'Grant This Service Account Access to Project' screen, ensure the following roles are assigned: 'Compute Viewer', 'Monitoring Viewer', and 'Cloud Asset Viewer'.

- Upon completion of the above, click 'Done'.

- Now find and select your project in the 'Service Accounts for Project' list.

- Move to the 'KEYS' section.

- Navigate through Keys > Add Key > Create New Key, and specify 'JSON' as the key type.

- Lastly, click on 'Create', and make sure to save your new key.

Now add the environment variable for the key

On the machine run:

export GOOGLE_APPLICATION_CREDENTIALS=<your-gcp-key>
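The `export` form above applies to Unix-like shells. Since the installation steps in this guide use PowerShell on Windows, the following is a minimal sketch of the equivalent there. The key path is a hypothetical placeholder; it assumes the variable should point at the JSON key file saved in the previous step.

```powershell
# Hypothetical path: replace with the location where you saved the JSON key.
$env:GOOGLE_APPLICATION_CREDENTIALS = "C:\path\to\your-gcp-key.json"

# Sanity check: prints True when the key file exists at that path.
Test-Path $env:GOOGLE_APPLICATION_CREDENTIALS
```

This sets the variable for the current PowerShell session only, so Telegraf would need to be started from the same session.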

Install Telegraf

This integration allows you to configure a Telegraf agent to send your metrics to Logit.io.

Choose the installation method for your operating system:

When you paste the command below into PowerShell, it will download the Telegraf zip file.

Once that is complete, press Enter again and the zip file will be extracted into C:\Program Files\InfluxData\telegraf\telegraf-1.34.1.

wget https://dl.influxdata.com/telegraf/releases/telegraf-1.34.1_windows_amd64.zip -UseBasicParsing -OutFile telegraf-1.34.1_windows_amd64.zip

Expand-Archive .\telegraf-1.34.1_windows_amd64.zip -DestinationPath 'C:\Program Files\InfluxData\telegraf'

Or, in PowerShell 7, use:

# Download the Telegraf ZIP file
Invoke-WebRequest -Uri "https://dl.influxdata.com/telegraf/releases/telegraf-1.34.1_windows_amd64.zip" `
  -OutFile "telegraf-1.34.1_windows_amd64.zip" `
  -UseBasicParsing

# Extract the contents of the ZIP file
Expand-Archive -Path ".\telegraf-1.34.1_windows_amd64.zip" `
  -DestinationPath "C:\Program Files\InfluxData\telegraf"

The default configuration file is located at:

C:\Program Files\InfluxData\telegraf\telegraf.conf

Configure the Telegraf input plugin

First you need to set up the input plug-in to enable Telegraf to scrape the GCP data from your hosts. This can be accomplished by incorporating the following code into your configuration file:

# Gather timeseries from Google Cloud Platform v3 monitoring API
[[inputs.stackdriver]]
  ## GCP Project
  project = "<your-project-name>"

  ## Include timeseries that start with the given metric type.
  metric_type_prefix_include = [
    "@metric_type",
  ]

  ## Most metrics are updated no more than once per minute; it is recommended
  ## to override the agent level interval with a value of 1m or greater.
  interval = "1m"

Read more about how to configure data scraping and configuration options for Stackdriver.
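For illustration, the input block might look like the sketch below once a metric type prefix is filled in. The prefix `bigquerybiengine.googleapis.com/` is an assumption about how Cloud Monitoring names BI Engine metrics. It is not taken from this guide, so check it against the Google Cloud metrics list before relying on it.

```toml
[[inputs.stackdriver]]
  ## GCP Project (placeholder from the guide)
  project = "<your-project-name>"

  ## Assumed prefix for BigQuery BI Engine metrics in Cloud Monitoring;
  ## verify it against the Google Cloud metrics list for your project.
  metric_type_prefix_include = [
    "bigquerybiengine.googleapis.com/",
  ]

  ## Poll once per minute, as recommended in the guide.
  interval = "1m"
```

A narrower prefix would limit collection to a subset of metrics, and a broader one would pull in more series than needed.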

Configure the output plugin

Once you have generated the configuration file, you need to set up the output plug-in to allow Telegraf to transmit your data to Logit.io in Prometheus format. This can be accomplished by incorporating the following code into your configuration file:

[[outputs.http]]
  url = "https://@metricsUsername:@metricsPassword@@metrics_id-vm.logit.io:@vmAgentPort/api/v1/write"
  data_format = "prometheusremotewrite"

  [outputs.http.headers]
    Content-Type = "application/x-protobuf"
    Content-Encoding = "snappy"

Start Telegraf

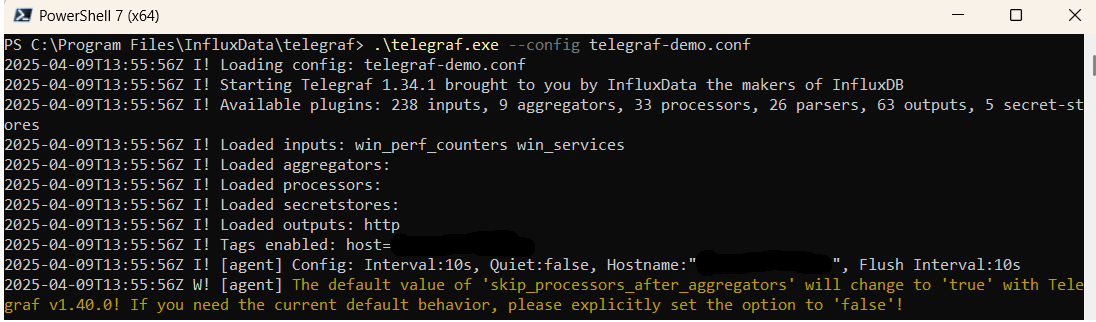

From the location where Telegraf was installed (C:\Program Files\InfluxData\telegraf\telegraf-1.34.1) run the program

providing the chosen configuration file as a parameter:

.\telegraf.exe --config telegraf.confOnce Telegraf is running you should see output similar to the following, which confirms the inputs, output and basic configuration the application has been started with:

Launch Grafana to View Your Data

Launch Grafana

How to diagnose no data in Stack

If you don't see data appearing in your stack after following this integration, take a look at the troubleshooting guide for steps to diagnose and resolve the problem or contact our support team and we'll be happy to assist.
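As a first local check before working through the troubleshooting guide, Telegraf's test mode can show whether the Stackdriver input is returning anything at all. This is a minimal sketch that assumes you run it from the Telegraf install directory with the same `telegraf.conf` used above.

```powershell
# --test gathers from the configured inputs once, prints the metrics to the
# console, and exits without sending anything to the outputs.
# --input-filter limits the run to the stackdriver input.
.\telegraf.exe --config telegraf.conf --input-filter stackdriver --test
```

If no metrics are printed, the cause is more likely on the collection side (credentials, project name, or metric type prefix) than in the output to Logit.io.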

Telegraf Google BigQuery BI Engine Metrics Overview

Efficient monitoring of Google BI Engine metrics across distributed systems calls for a robust metrics management solution. Telegraf, an open-source server agent, fits this role: it gathers and reports metrics from Google BI Engine and many other sources, such as active system instances, databases, and applications.

Telegraf possesses an extensive range of input plugins, allowing users to collect important metrics such as query counts, latency, error rates, and more. These metrics are crucial for understanding the performance of the Google BI Engine. For storing and analyzing these gathered metrics, organizations can employ Prometheus, a highly respected open-source monitoring and alerting toolkit, acclaimed for its adaptable query language and strong data visualization capabilities.

Transmitting Google BI Engine metrics from Telegraf to Prometheus involves setting up Telegraf to produce metrics in Prometheus's format, followed by arranging Prometheus to scrape these metrics from the Telegraf server.

Once the metrics are successfully integrated into Prometheus, further comprehensive analysis and visualization can be carried out using Grafana. Grafana, a prime open-source platform renowned for its monitoring and observability features, integrates effortlessly with Prometheus. It allows users to create dynamic, interactive dashboards for an in-depth look into the metrics data, providing a thorough understanding of performance patterns and potential issues within Google BI Engine.

If you need any further assistance with shipping your metrics to Logit.io, we're here to help you get started. Feel free to contact our support team by sending us a message via live chat and we'll be happy to assist.