Google Cloud TPU Metrics

Ship your Google Cloud TPU Metrics via Telegraf to your Logit.io Stack

Follow the steps below to send your observability data to Logit.io

Metrics

Configure Telegraf to ship Google Cloud TPU metrics to your Logit.io stacks.

Install Integration

Set Credentials in GCP

- Begin by heading over to the 'Project Selector' and select the specific project from which you wish to send metrics.

- Progress to the 'Service Account Details' screen. Here, assign a distinct name to your service account and opt for 'Create and Continue'.

- In the 'Grant This Service Account Access to Project' screen, ensure the following roles are assigned: 'Compute Viewer', 'Monitoring Viewer', and 'Cloud Asset Viewer'.

- Upon completion of the above, click 'Done'.

- Now find and select your project in the 'Service Accounts for Project' list.

- Move to the 'KEYS' section.

- Navigate through Keys > Add Key > Create New Key, and specify 'JSON' as the key type.

- Lastly, click on 'Create', and make sure to save your new key.
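The console steps above can also be expressed as gcloud commands. The following is a minimal sketch that only builds and prints the command lines; it does not run them. The project ID, service account name and key file name are hypothetical, and the role IDs are an assumed mapping of the three display names listed above, so check them against your own project before use.

```python
import shlex

# Assumed role IDs for the display names used in the steps above.
ROLES = [
    "roles/compute.viewer",     # Compute Viewer
    "roles/monitoring.viewer",  # Monitoring Viewer
    "roles/cloudasset.viewer",  # Cloud Asset Viewer
]


def gcloud_commands(project_id, account_name, key_file="telegraf-key.json"):
    """Return gcloud command lines that mirror the console steps."""
    # Service account e-mail addresses follow this fixed pattern.
    email = f"{account_name}@{project_id}.iam.gserviceaccount.com"
    commands = [
        ["gcloud", "iam", "service-accounts", "create", account_name,
         "--project", project_id],
    ]
    # One policy binding per role.
    for role in ROLES:
        commands.append([
            "gcloud", "projects", "add-iam-policy-binding", project_id,
            "--member", f"serviceAccount:{email}",
            "--role", role,
        ])
    # Create and download a JSON key for the account.
    commands.append([
        "gcloud", "iam", "service-accounts", "keys", "create", key_file,
        "--iam-account", email,
    ])
    return commands


# Hypothetical project and account names, for illustration only.
for command in gcloud_commands("my-project", "telegraf-metrics"):
    print(" ".join(shlex.quote(part) for part in command))
```

Printing the commands rather than executing them keeps the sketch safe to run anywhere; review the output and run the commands yourself once the names match your project.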

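Now set the environment variable that points to the key

On the machine running Telegraf, run the following, replacing <your-gcp-key> with the path to the JSON key file you saved: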

export GOOGLE_APPLICATION_CREDENTIALS=<your-gcp-key>
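Before moving on, it can be useful to confirm that the variable points at a usable key. The sketch below is an optional check, not part of the Logit.io integration: it reads the file named by GOOGLE_APPLICATION_CREDENTIALS and reports missing fields. The list of required fields reflects the usual layout of a service account JSON key and is an assumption of this example.

```python
import json
import os

# Fields normally present in a service account JSON key (assumed list).
REQUIRED_FIELDS = ("type", "project_id", "client_email", "private_key")


def check_key_file(path):
    """Return a list of problems found in a service account key file."""
    if not path:
        return ["GOOGLE_APPLICATION_CREDENTIALS is not set"]
    if not os.path.isfile(path):
        return [f"no file at {path}"]
    try:
        with open(path, encoding="utf-8") as handle:
            key = json.load(handle)
    except (OSError, ValueError) as exc:
        return [f"could not read {path} as JSON: {exc}"]
    if not isinstance(key, dict):
        return ["key file does not contain a JSON object"]
    problems = [f"missing field: {name}"
                for name in REQUIRED_FIELDS if not key.get(name)]
    # A key of another type (for example a user credential) will not work here.
    if key.get("type") and key["type"] != "service_account":
        problems.append(f"unexpected key type: {key['type']}")
    return problems


found = check_key_file(os.environ.get("GOOGLE_APPLICATION_CREDENTIALS"))
print(found if found else "key file looks usable")
```

An empty result only means the file has the expected shape; it does not prove that the roles were granted.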

Install Telegraf

This integration allows you to configure a Telegraf agent to send your metrics to Logit.io.

Choose the installation method for your operating system:

When you paste the commands below into PowerShell, the Telegraf zip file is downloaded.

Once that is complete, press Enter again and the zip file will be extracted into C:\Program Files\InfluxData\telegraf\telegraf-1.34.1.

wget https://dl.influxdata.com/telegraf/releases/telegraf-1.34.1_windows_amd64.zip -UseBasicParsing -OutFile telegraf-1.34.1_windows_amd64.zip

Expand-Archive .\telegraf-1.34.1_windows_amd64.zip -DestinationPath 'C:\Program Files\InfluxData\telegraf'

Or, in PowerShell 7, use:

# Download the Telegraf ZIP file

Invoke-WebRequest -Uri "https://dl.influxdata.com/telegraf/releases/telegraf-1.34.1_windows_amd64.zip" `

-OutFile "telegraf-1.34.1_windows_amd64.zip" `

-UseBasicParsing

# Extract the contents of the ZIP file

Expand-Archive -Path ".\telegraf-1.34.1_windows_amd64.zip" `

-DestinationPath "C:\Program Files\InfluxData\telegraf"

The default configuration file is located at:

C:\Program Files\InfluxData\telegraf\telegraf.conf

Configure the Telegraf input plugin

First you need to set up the input plug-in to enable Telegraf to scrape the GCP data from your hosts. This can be accomplished by incorporating the following code into your configuration file:

# Gather timeseries from Google Cloud Platform v3 monitoring API

[[inputs.stackdriver]]

## GCP Project

project = "<your-project-name>"

## Include timeseries that start with the given metric type.

metric_type_prefix_include = [

"@metric_type",

]

## Most metrics are updated no more than once per minute; it is recommended

## to override the agent level interval with a value of 1m or greater.

interval = "1m"

Read more about how to configure data scraping and configuration options for Stackdriver.
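To make the placeholders in the input section concrete, here is a small sketch that renders the same [[inputs.stackdriver]] block for a given project and list of metric type prefixes. The project name is hypothetical, and the prefix "tpu.googleapis.com/" is an assumption about where Cloud TPU metrics are published; confirm the prefix in your own Cloud Monitoring metrics list before relying on it.

```python
def stackdriver_input(project, prefixes, interval="1m"):
    """Render an [[inputs.stackdriver]] section as configuration text."""
    lines = [
        "[[inputs.stackdriver]]",
        f'  project = "{project}"',
        "  metric_type_prefix_include = [",
    ]
    # One quoted entry per metric type prefix.
    lines += [f'    "{prefix}",' for prefix in prefixes]
    lines += [
        "  ]",
        f'  interval = "{interval}"',
    ]
    return "\n".join(lines) + "\n"


# Hypothetical project; the TPU prefix is an assumption to verify.
print(stackdriver_input("my-project", ["tpu.googleapis.com/"]))
```

The default interval of 1m follows the recommendation in the configuration comments above.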

Configure the output plugin

Once you have generated the configuration file, you need to set up the output plug-in to allow Telegraf to transmit your data to Logit.io in Prometheus format. This can be accomplished by incorporating the following code into your configuration file:

[[outputs.http]]

url = "https://@metricsUsername:@metricsPassword@@metrics_id-vm.logit.io:@vmAgentPort/api/v1/write"

data_format = "prometheusremotewrite"

[outputs.http.headers]

Content-Type = "application/x-protobuf"

Content-Encoding = "snappy"

Start Telegraf
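Before starting the agent, it can help to check that the url value in the [[outputs.http]] section is well formed. Because the credentials are embedded in the URL, characters such as @ or / in a password would otherwise change how the URL is parsed. The sketch below percent-encodes the username and password and follows the host pattern shown in the output configuration; the credential values, stack ID and port used here are hypothetical placeholders, and it is an assumption of this example that your credentials need encoding at all.

```python
from urllib.parse import quote, urlsplit


def remote_write_url(username, password, metrics_id, port):
    """Build the remote write URL with percent-encoded credentials."""
    # safe="" makes sure reserved characters such as @ and / are encoded too.
    user = quote(username, safe="")
    secret = quote(password, safe="")
    return (f"https://{user}:{secret}@{metrics_id}-vm.logit.io:"
            f"{port}/api/v1/write")


# Hypothetical values, for illustration only.
url = remote_write_url("metrics-user", "p@ss/word", "abc123", 443)
parts = urlsplit(url)
# Parsing the result back confirms the host, port and path are intact.
print(parts.hostname, parts.port, parts.path)
```

If the parsed host name or port differs from what your stack settings show, correct the url value before running Telegraf.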

From the location where Telegraf was installed (C:\Program Files\InfluxData\telegraf\telegraf-1.34.1) run the program

providing the chosen configuration file as a parameter:

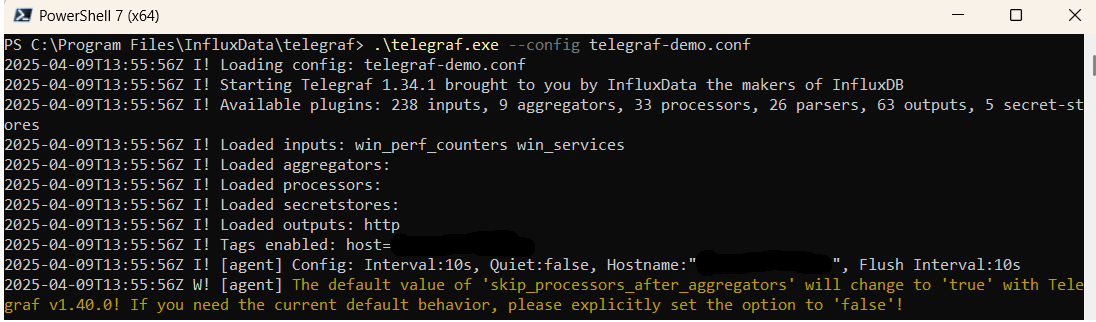

.\telegraf.exe --config telegraf.confOnce Telegraf is running you should see output similar to the following, which confirms the inputs, output and basic configuration the application has been started with:

Launch Grafana to View Your Data

How to diagnose no data in Stack

If you don't see data appearing in your stack after following this integration, take a look at the troubleshooting guide for steps to diagnose and resolve the problem or contact our support team and we'll be happy to assist.

Telegraf Google Cloud TPU Metrics Overview

Telegraf, the open-source server agent created by InfluxData, collects metrics and data from a wide range of sources, including specialized computing resources. Google Cloud TPUs are custom-developed hardware accelerators designed to speed up and scale specific machine learning (ML) workloads written with frameworks such as TensorFlow, PyTorch, and JAX. These processors are optimized to deliver high performance for both training and inference across a range of ML models, enabling researchers, developers, and businesses to accelerate their machine learning applications more efficiently than with general-purpose GPU and CPU computing.

Integrating Telegraf with Google Cloud TPUs allows organizations to monitor the performance and utilization of these resources in near real time. This is crucial for optimizing machine learning workflows, ensuring efficient use of TPU resources, and reducing computational costs. By collecting metrics such as operations per second, processing time, and resource utilization, teams can gain insights into their TPU performance, identify bottlenecks in their ML pipelines, and make informed decisions to improve model training and inference.

However, the complexity and volume of data generated by TPUs, combined with the critical nature of ML workloads, necessitate a robust platform for data analysis and visualization. Logit.io offers a comprehensive solution, providing an advanced analytics platform that simplifies the processing, visualization, and analysis of metrics from Telegraf and Google Cloud TPUs.

With Logit.io, organizations can enhance their monitoring and analytics capabilities for machine learning projects utilizing Google Cloud TPUs, enabling them to maximize resource utilization, improve model accuracy, and accelerate time to insights. The platform's advanced features support proactive management of ML workflows, helping to ensure the successful deployment of machine learning applications at scale.

For those using Telegraf with Google Cloud TPUs and seeking to improve their ML infrastructure monitoring and analytics, Logit.io provides the essential tools and expertise. Our platform facilitates effective management of complex ML metrics, allowing organizations to derive actionable insights and achieve their machine learning objectives.

With Google Cloud TPUs serving as powerful accelerators for AI and ML tasks, it's crucial to have a robust log management and analysis solution in place. Google Storage Transfer Metrics can be a pivotal component in this process. By using Logit.io's Google Cloud integrations, you can keep track of data transfer metrics with precision.

In addition to TPUs, Logit.io's integrations also extend to Google App Engine, offering a holistic solution for monitoring and managing your applications running on this serverless platform. By connecting your App Engine instances to Logit.io, you can obtain real-time insights into application performance, error tracking, and resource utilization.